
ML Process Lifecycle – Part 1: What It Is and Why We Need It

This is the first part of a three-part series on the ML Process Lifecycle. Read Part 2 and Part 3.

Machine Learning (ML) has been experiencing explosive growth in popularity due to its ability to learn from data automatically with minimal human intervention. As ML is implemented and applied more in business settings, ML practitioners need to develop methods to describe the timing of their project work to their employers or clients.

One tool which is particularly useful in this regard is the ML Process Lifecycle, a process framework adapted by the Amii team (see note below). In this three-part blog series, we will be exploring what it is, why it’s important and how you can implement it.

What is the MLPL?

The ML Process Lifecycle (MLPL) is a framework that captures the iterative process of developing an ML solution for a specific problem.

ML project development and implementation is an exploratory and experimental process where different learning algorithms and methods are tried before arriving at a satisfactory solution. The journey to reach an ML solution that meets business expectations is rarely linear – as an ML practitioner advances through different stages of the process and more information is generated or uncovered, they may need to go back to make changes or start over completely.

The MLPL tries to capture this dynamic workflow between different stages and the sequence in which these stages are carried out.

Where does the MLPL fit?

When business organizations develop new software systems or introduce new features to existing systems, they go through two major phases:

- Business analysis: making assessments and business decisions regarding the value and feasibility of a new software product or feature; and

- Product development: developing the solution (usually following one of the existing software development methodologies) and putting it into production.

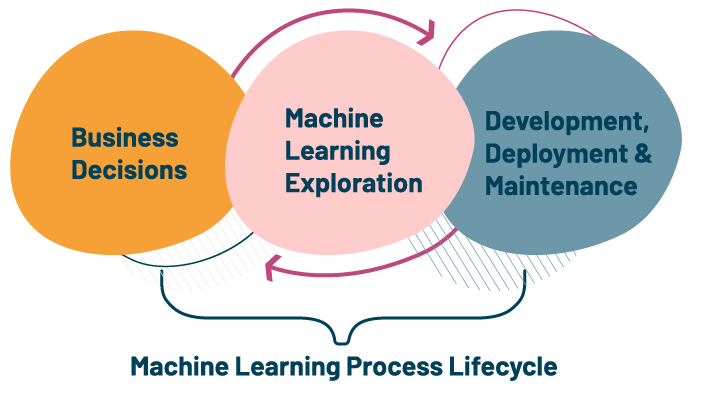

However, when an organization thinks about adopting ML – either to complement their current software products/services or to address a fresh business problem – there is an additional exploration phase between the business analysis and product development phases. The MLPL streamlines and defines this process.

The MLPL is an iterative methodology to execute ML exploration tasks, generalizing the process so that it is flexible and modular enough to be applied to different problems in different domains, while at the same time having enough modules to fully describe relevant decision points and milestones.

What does an ML Exploration Process entail?

Ideally, an organization would want to know all possibilities and consequences of an ML solution before introducing it into a system. The ML Exploration Process seeks to determine whether or not an ML solution is the best business decision by addressing the following questions:

- Can ML address my business problem?

- Is there supporting data?

- Can algorithms take advantage of the data?

- What is the value added by introducing ML?

- What is the technical feasibility of arriving at a solution with ML?

What doesn’t the MLPL capture?

When an organization first begins to think about adopting ML, often the first thing it will do is perform a business analysis. This involves identifying business workflows and business problems, assessing resources, and identifying the tasks and decision points where ML solutions could fit and return business value. The MLPL does not capture all aspects of this analysis; it addresses only those pieces that directly impact ML problem definition.

After ML Exploration is complete, an organization may decide to develop the ML solution into a product or service as a tangible component, deploying it into production and maintaining it. This phase is also not captured in the MLPL.

The MLPL only deals with the exploration phase where different methods are tried to arrive at a proof-of-concept solution which can be later adapted to develop a complete ML system.

Why do we need the MLPL?

We have seen an overview of what the MLPL captures and what it does not. But why do we need a process to capture an exploration task? There are a few important reasons why an organization should use the MLPL:

- Risk Mitigation: The MLPL standardizes the stages of an ML project and defines standard modules for each of those stages, thereby minimizing the risk of missing out on important ML practices.

- Standardization: Standardizing the workflow across teams through an end-to-end framework lets users build and operate ML systems consistently, and allows inter-team tasks to be carried out smoothly.

- Tracking: The MLPL allows you to track the different stages and the modules inside each stage. Because this is an exploration task, many attempts will never be used in the final ML solution, even though they required significant investment. The MLPL allows you to track the resources spent on these experiments and to evaluate them when planning future iterations.

- Reproducibility: Having a standardized process enables an organization to build pipelines for creating and managing experiments, which can be compared and reproduced for future projects.

- Scalability: A standard workflow also allows an organization to manage multiple experiments simultaneously.

- Governance: Well-defined stages and modules for each stage support better audits that assess whether the ML systems are designed appropriately and operating effectively.

- Communication: A standard guideline helps set expectations and facilitates effective communication between teams about the workflow of the projects.
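To make the tracking point above more concrete, here is a minimal Python sketch of an experiment log. It is not part of the MLPL itself: the class names, field names and stage labels (`modelling`, `data-preparation`) are hypothetical, chosen only to illustrate how the effort spent on experiments, including those that never reach the final solution, might be recorded and summarized.

```python
from dataclasses import dataclass, field


@dataclass
class Experiment:
    """One exploration attempt. All field names are illustrative assumptions."""
    name: str
    stage: str              # hypothetical stage label, not an official MLPL stage name
    hours_spent: float
    used_in_final: bool = False


@dataclass
class ExperimentLog:
    """Collects experiments so the effort spent on them can be summarized."""
    experiments: list[Experiment] = field(default_factory=list)

    def record(self, experiment: Experiment) -> None:
        self.experiments.append(experiment)

    def hours_by_stage(self) -> dict[str, float]:
        # Sum the hours spent per stage, across all recorded experiments.
        totals: dict[str, float] = {}
        for exp in self.experiments:
            totals[exp.stage] = totals.get(exp.stage, 0.0) + exp.hours_spent
        return totals

    def hours_on_unused(self) -> float:
        # Effort invested in attempts that did not make it into the final solution.
        return sum(e.hours_spent for e in self.experiments if not e.used_in_final)


log = ExperimentLog()
log.record(Experiment("baseline-logreg", "modelling", 6.0))
log.record(Experiment("gradient-boosting", "modelling", 10.0, used_in_final=True))
log.record(Experiment("feature-audit", "data-preparation", 4.0))

print(log.hours_by_stage())
print(log.hours_on_unused())
```

In practice a team would more likely rely on a dedicated experiment-tracking tool, but the same idea applies: every attempt is logged against a stage, so discarded experiments remain visible when planning future iterations.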

In Part 2 of the MLPL Series, we take an in-depth look at the MLPL framework and go through the key aspects of each stage.

Amii’s MLPL Framework leverages already-existing knowledge from the teams at organizations like Microsoft, Uber, Google, Databricks and Facebook. The MLPL has been adapted by Amii teams to be a technology-independent framework that is abstract enough to be flexible across problem types and concrete enough for implementation. To fit our clients’ needs, we’ve also decoupled the deployment and exploration phases, provided process modules within each stage and defined key artifacts that result from each stage. The MLPL also ensures we’re able to capture any learnings that come about throughout the overall process but that aren’t used in the final model.

If you want to learn more about this and other interesting ML topics, we highly recommend Amii’s recently launched online course Machine Learning: Algorithms in the Real World Specialization, taught by our Managing Director of Applied Science, Anna Koop. Visit the Training page to learn about all of our educational offerings.
